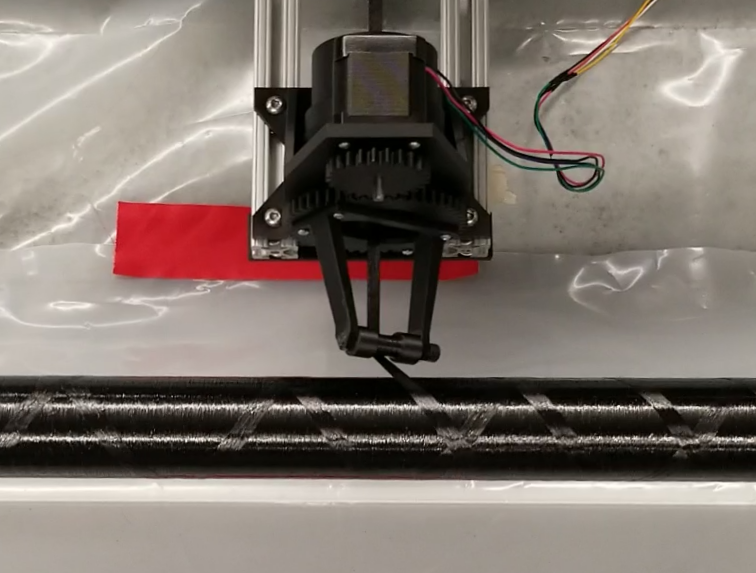

Contraption: DIY Filament Winder

One of my main projects for 2022 was developing a custom filament winder and accompanying wind planning script. A filament winder is a kind of CNC machine that makes composite tubes by wrapping a ribbon of fiber around a mandrel. Unsurprisingly, I intend to use mine to fabricate carbon fiber parts for rockets.
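As a rough illustration of the geometry a wind planning script has to deal with, here is a minimal sketch of helical winding on a plain cylinder. It is not taken from the actual script: the function names, the convention that the wind angle is measured from the mandrel axis, and the assumption of a constant-diameter mandrel are all hypothetical.

```python
import math

def carriage_advance_per_rev(mandrel_d, wind_angle_deg):
    """Axial distance the carriage moves per mandrel revolution.

    Assumes a cylindrical mandrel and a wind angle measured from the
    mandrel axis (hypothetical convention for this sketch).
    """
    alpha = math.radians(wind_angle_deg)
    return math.pi * mandrel_d / math.tan(alpha)

def revs_per_pass(mandrel_d, wind_angle_deg, length):
    """Mandrel revolutions needed for one carriage pass along `length`."""
    return length / carriage_advance_per_rev(mandrel_d, wind_angle_deg)

def passes_for_coverage(mandrel_d, wind_angle_deg, tow_width):
    """Passes in one direction needed to cover the surface once.

    A tow of width `tow_width` laid at the wind angle covers
    tow_width / cos(angle) of the circumference.
    """
    alpha = math.radians(wind_angle_deg)
    circumference = math.pi * mandrel_d
    return math.ceil(circumference * math.cos(alpha) / tow_width)
```

For example, `passes_for_coverage(0.1, 45, 0.01)` gives the number of 10 mm wide passes needed to cover a 100 mm mandrel at a 45 degree wind angle. A real planner also has to handle turnarounds at the ends and pattern indexing, which this sketch ignores.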

Read More

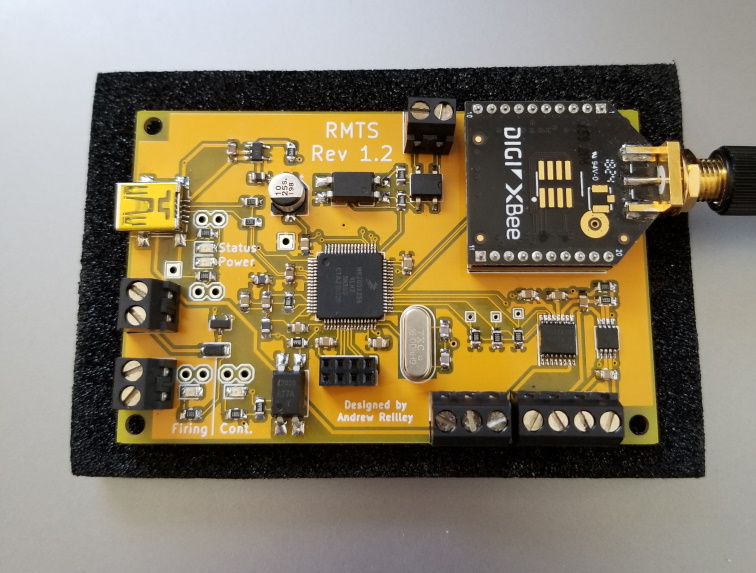

RMTS

The Rocket Motor Test System (RMTS) is a complete test stand instrumentation system in a single compact device. Designed for solid rocket motors, it handles ignition and collects data from a pressure transducer and a load cell. The device connects to the user's computer over a radio link, which is used to trigger ignition and to send back results after the firing is complete.

Read More

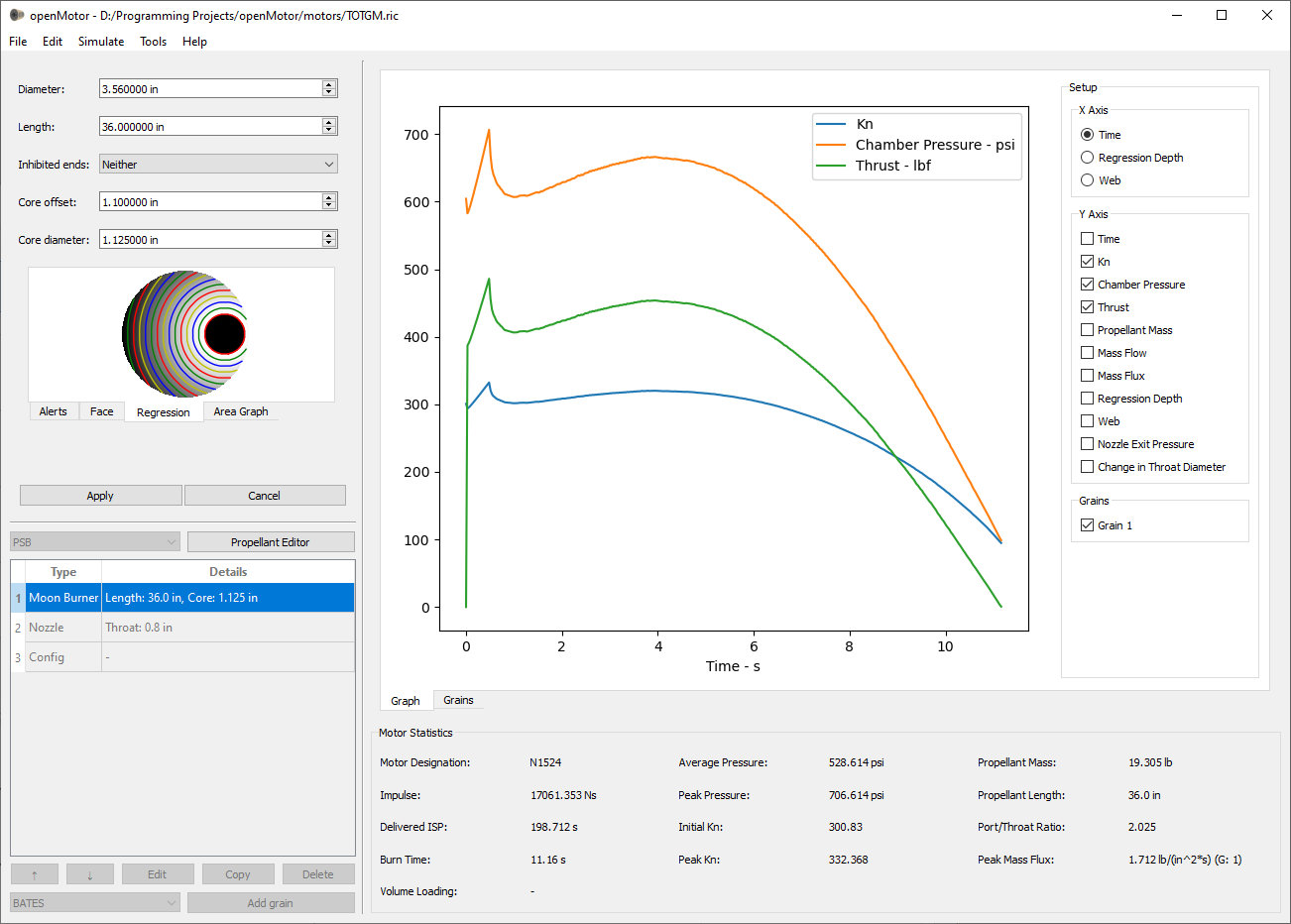

openMotor

openMotor is an open-source internal ballistics simulator for solid rocket motor experimenters. It is a GUI application that takes in a motor's propellant characteristics, grain geometry, and nozzle configuration and estimates pressure, thrust, and other characteristics to help the user iterate on their design. It can simulate the regression of arbitrary grain geometries.
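To give a feel for the kind of calculation an internal ballistics simulator performs, here is a minimal sketch of a steady-state chamber pressure estimate for a single BATES grain. It is not openMotor's code or API: the function names are hypothetical, and it assumes Saint-Robert's burn rate law (r = a·Pⁿ), SI units throughout, and a chamber where mass generated equals mass leaving through the nozzle.

```python
import math

def bates_burn_area(outer_d, core_d, length, regression):
    """Burning area of one BATES grain (core plus both end faces)
    after every burning surface has regressed by `regression` meters."""
    core = core_d + 2 * regression      # core grows outward
    seg_len = length - 2 * regression   # both end faces burn inward
    if core >= outer_d or seg_len <= 0:
        return 0.0                      # grain is fully consumed
    core_area = math.pi * core * seg_len
    end_area = 2 * (math.pi / 4) * (outer_d**2 - core**2)
    return core_area + end_area

def steady_state_pressure(burn_area, throat_area, a, n, density, c_star):
    """Chamber pressure where mass generated equals mass through the nozzle.

    Balances density * burn_area * a * P**n against P * throat_area / c_star.
    `a` must be in units consistent with pressure in Pa and rate in m/s.
    """
    kn = burn_area / throat_area
    return (kn * a * density * c_star) ** (1 / (1 - n))
```

Stepping `regression` forward in small increments and recomputing the pressure at each step yields a rough pressure curve. Handling arbitrary grain shapes requires a more general way of tracking the burning surface than the closed-form area used here.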

Repository

Solid Rocket Motors

As my rocketry hobby turned into an obsession, the scope of my interest (mostly) narrowed to propulsion. While working with the MIT Rocket Team I designed, built, and fired solid rocket motors up to 6" in diameter that produced as much as 3,000 lbf of thrust. Since then, I have continued to develop my skills in this area with new formulations and designs.

Read More

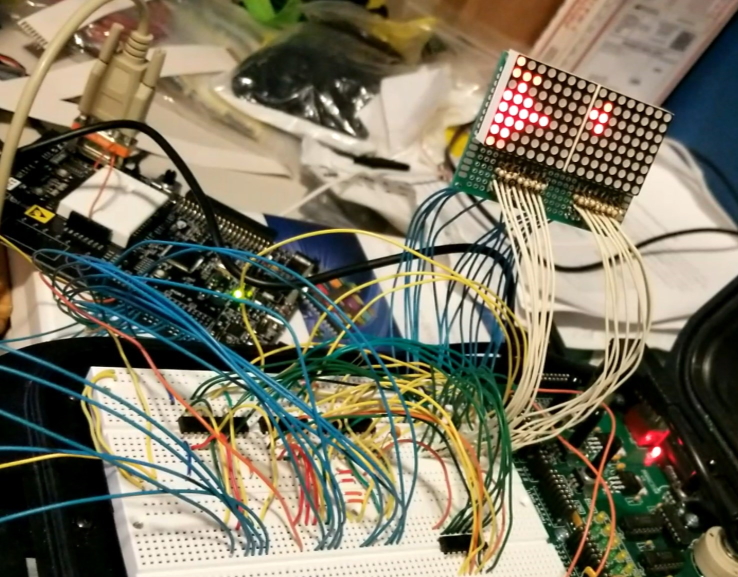

Tetris

I took MIT's 6.115 Microcomputer Project Laboratory in the spring of 2018. My final project for the class was to build a version of Tetris entirely in 8051 assembly. The code for the project is on my GitHub.

Repository

Emulators

After taking 6.115, I realized that I had a decent understanding of how 8-bit microprocessors work and decided to apply this knowledge by programming an emulator for the original Game Boy. I started in Python and got to the point where the emulator could display the Tetris splash screen before I noticed that performance was going to be an issue. Rather than refactor, I started to convert the code to Rust, but I haven't had time to finish it yet.

Python Repository

Rust Repository

Rocketry

Amateur rocketry has been a passion of mine since 2010 and I have constructed and flown dozens of rockets in the past decade. I have L3 certifications from the NAR and TRA and attend launches whenever I can.

Read More

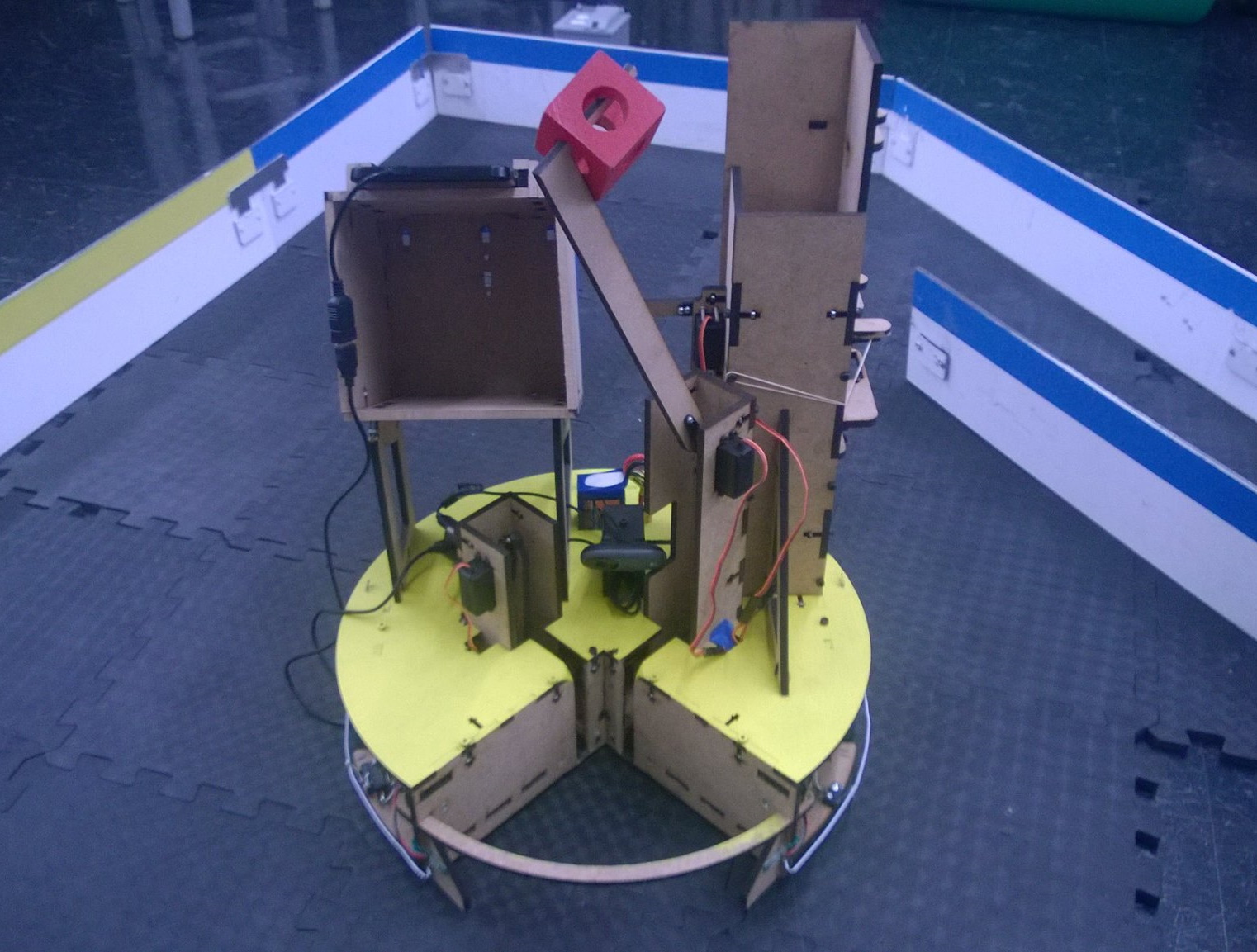

MASLAB

I spent much of January 2016 working on a robot for MIT's Mobile Autonomous Systems Lab, which is a competition that gives teams a month to build autonomous robots. The robot had to locate stacks of cubes in the arena and restack them by color. I mostly worked to design and implement our computer vision system. My team took first place in the competition, and details about our design can be found on our team wiki.

Wiki

Repository

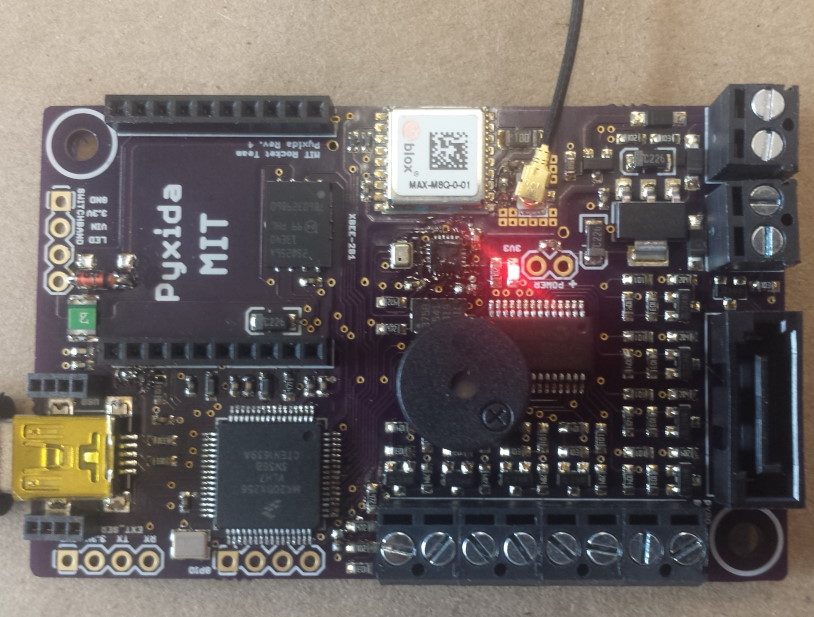

Pyxida

Pyxida is the flight computer that I helped MIT's rocket team develop as the lead of the Avionics subteam. It estimates the location and orientation of a rocket and uses this information to trigger flight events while also transmitting it to a ground station.

Fractals

I've built a few tools for generating fractals. My most recent foray into this field was a fractal renderer that uses the parallel nature of GPU computation to rapidly render Mandelbrot and Julia sets. The calculations are done on the GPU in a shader, so the host program only needs to pass the user's inputs to the graphics card. The properties of the fractal are exposed to the user, including the equation that is iterated, which can be changed at runtime by recompiling the shader.
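The per-pixel calculation behind these images is the escape-time iteration, sketched below on the CPU in Python rather than in a shader. This is an illustration, not the renderer's code: the function names and the `step` parameter (standing in for the user-editable equation) are hypothetical, and the escape radius of 2 is only guaranteed to be correct for the default z² + c equation.

```python
def escape_time(c, z0=0j, max_iter=100, step=lambda z, c: z * z + c):
    """Iterations before |z| exceeds 2, or max_iter if it never does.

    Mandelbrot set: z0 = 0 and c is the pixel coordinate.
    Julia set: z0 is the pixel coordinate and c is a fixed constant.
    """
    z = z0
    for i in range(max_iter):
        if abs(z) > 2.0:
            return i
        z = step(z, c)
    return max_iter

def render_mandelbrot(width, height, max_iter=50):
    """ASCII rendering of the region [-2, 1] x [-1, 1] of the complex plane.

    Requires width and height of at least 2.
    """
    rows = []
    for y in range(height):
        row = ""
        for x in range(width):
            c = complex(-2.0 + 3.0 * x / (width - 1),
                        -1.0 + 2.0 * y / (height - 1))
            inside = escape_time(c, max_iter=max_iter) == max_iter
            row += "#" if inside else "."
        rows.append(row)
    return rows
```

Calling `print("\n".join(render_mandelbrot(60, 24)))` draws a coarse Mandelbrot set in the terminal. Each pixel is computed independently of every other, which is why the work maps so well onto a GPU.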

TDML

I wrote a basic 3D game engine in C++ using OpenGL back in high school. This was the project that I used to teach myself OpenGL, and the original version was written using immediate mode and the fixed-function pipeline. As the project progressed, I transitioned it to modern OpenGL features such as VBOs, VAOs, and shaders. I've learned a lot since I developed TDML, but the repository for the project is still hosted here for historical purposes.

Repository